Magento 2 robots.txt file

Your website probably contains pages or page elements that you would rather keep away from search engine bots and out of the index. The default Magento 2 admin settings let you block unwanted crawling with a robots.txt file.

Hiding your site's SID parameters and JavaScript files from crawlers can considerably reduce server load and help prevent duplicate content. This is especially useful alongside a Magento 2 XML sitemap, which points the bots to the pages you do want indexed.

Follow these steps to configure the robots.txt file in your Magento 2 store:

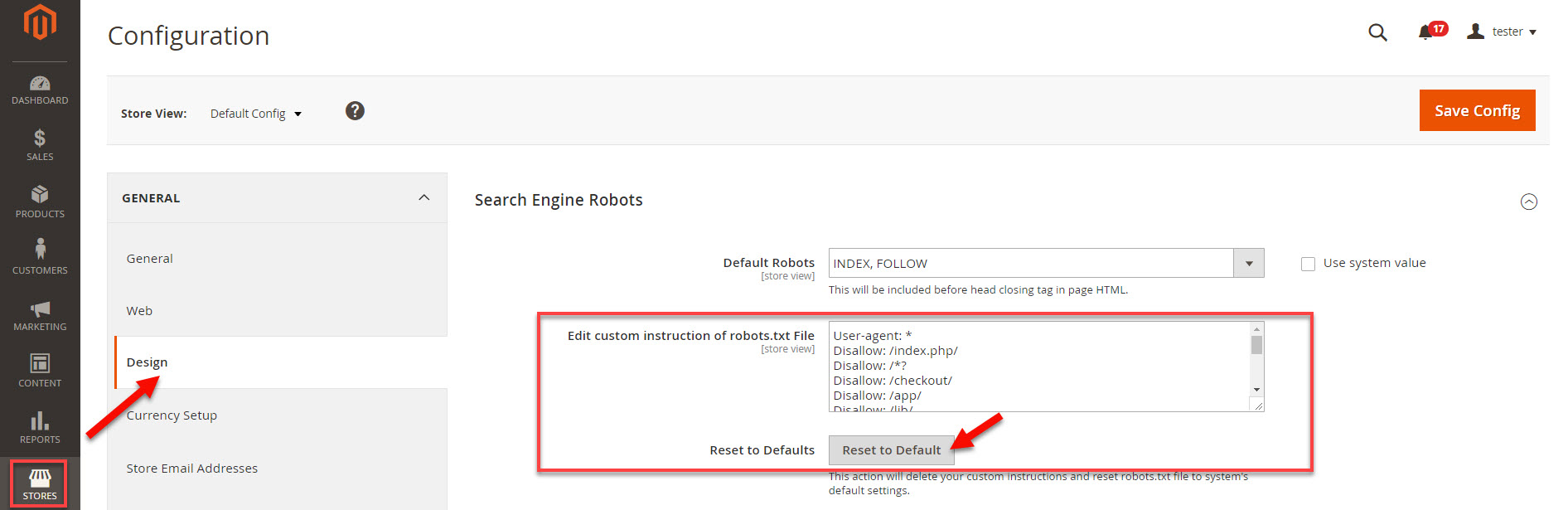

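- In the Magento admin, go to the Stores tab and select Configuration under the Settings section.

- Under the General heading, select Design and expand the Search Engine Robots section. In Magento 2.2 and later, the same settings are found under Content > Design > Configuration, in the Search Engine Robots section of the selected scope.

- Click the Reset to Default button to fill the Edit custom instruction of robots.txt field with the default instructions. You can then modify the instructions for web crawlers in this field.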

You can use the following rules to block particular parts of your website. Each group of rules must sit under a User-agent line (for example, User-agent: * to address all crawlers):

User accounts and checkout process:

Disallow: /checkout/

Disallow: /onestepcheckout/

Disallow: /customer/

Disallow: /customer/account/

Disallow: /customer/account/login/

Native catalog and search pages:

Disallow: /catalogsearch/

Disallow: /catalog/product_compare/

Disallow: /catalog/category/view/

Disallow: /catalog/product/view/

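CMS directories:

Disallow: /app/

Disallow: /bin/

Disallow: /dev/

Disallow: /lib/

Disallow: /phpserver/

Disallow: /pub/

Note that when the web root is the Magento root directory, /pub/ also holds static assets and media (CSS, JavaScript, images). Blocking it may keep search engines from rendering your pages properly, so check how your store is served before adding that rule.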

Duplicate content:

Disallow: /tag/

Disallow: /review/
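Before saving the rules, it can help to check that they block what you expect. The following is a minimal sketch using Python's standard urllib.robotparser module. The rule set is a subset of the groups above, and the helper name is_crawlable and the sample paths are hypothetical, chosen only for illustration. Note that this parser uses simple prefix matching and does not interpret wildcard patterns.

```python
from urllib.robotparser import RobotFileParser

# A subset of the rules listed above. "User-agent: *" applies them to all crawlers.
ROBOTS_TXT = """\
User-agent: *
Disallow: /checkout/
Disallow: /customer/
Disallow: /catalogsearch/
Disallow: /catalog/product_compare/
Disallow: /review/
"""


def is_crawlable(path, robots_txt=ROBOTS_TXT, agent="Googlebot"):
    """Return True if `agent` may fetch `path` under the given robots.txt text."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(agent, path)


if __name__ == "__main__":
    # Hypothetical storefront paths, used only to exercise the rules.
    for path in ("/checkout/cart/", "/customer/account/login/", "/women/tops.html"):
        print(path, "allowed" if is_crawlable(path) else "blocked")
```

Once the file is live, the same check can be pointed at the real robots.txt by calling set_url() and read() on the parser instead of parse().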

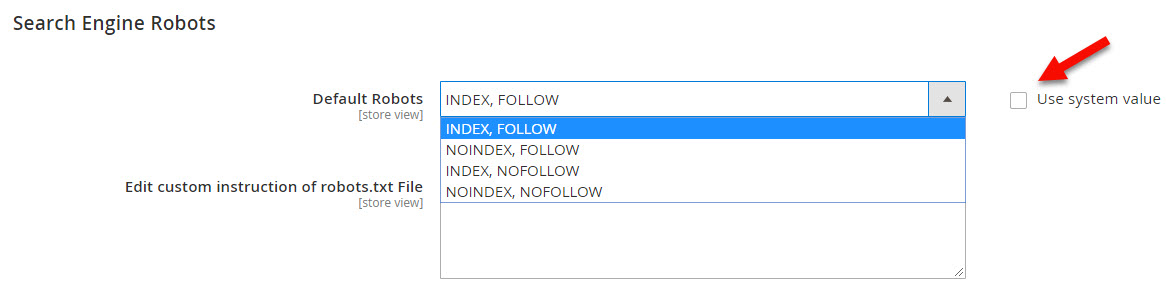

Besides the robots.txt instructions, Magento 2 also lets you set the default meta robots tag (the Default Robots setting), which controls the Noindex and Nofollow directives. Let's consider each of the options:

- INDEX, FOLLOW - tells the bots to index your pages and follow the links on them;

- NOINDEX, FOLLOW - instructs crawlers not to index your pages but still to follow the links on them;

- INDEX, NOFOLLOW - allows search engines to index your pages but tells them not to follow the links on them;

- NOINDEX, NOFOLLOW - tells web bots neither to index your pages nor to follow the links on them.

To change the setting, clear the Use system value checkbox and select a suitable option.
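To confirm which option a page actually serves, you can read the robots meta tag from its HTML. The sketch below uses Python's standard html.parser module and assumes the storefront emits a standard meta tag with name="robots". The class name RobotsMetaParser, the function robots_directives, and the sample markup are hypothetical.

```python
from html.parser import HTMLParser


class RobotsMetaParser(HTMLParser):
    """Collects directives from <meta name="robots" content="..."> tags."""

    def __init__(self):
        super().__init__()
        self.directives = set()

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attr = dict(attrs)
        if (attr.get("name") or "").lower() == "robots":
            content = attr.get("content") or ""
            # Split "NOINDEX,FOLLOW" into individual upper-case directives.
            self.directives.update(
                part.strip().upper() for part in content.split(",") if part.strip()
            )


def robots_directives(html):
    """Return the set of robots directives found in an HTML document."""
    parser = RobotsMetaParser()
    parser.feed(html)
    return parser.directives


if __name__ == "__main__":
    # Hypothetical page head, as it might look with NOINDEX, FOLLOW selected.
    sample = '<html><head><meta name="robots" content="NOINDEX,FOLLOW"></head></html>'
    print(sorted(robots_directives(sample)))
```

An empty result means the page carries no robots meta tag, in which case most search engines fall back to indexing the page and following its links.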

Conclusions

By configuring the robots.txt file and the Noindex and Nofollow tags, you can avoid unwanted indexing of particular parts of your website. This lets you show Google only the most SEO-valuable information and hide unimportant data that could bring your search positions down.
